- Humanoid robot development represents a fundamental shift from static industrial automation to dynamic, environment-responsive systems.

- Modular software frameworks are reported to cut initial simulation training times for movement precision by nearly 40%.

- Hardware costs remain the primary barrier, but standardized open-source control interfaces are widening access for mid-sized manufacturers.

Everyday User Impact

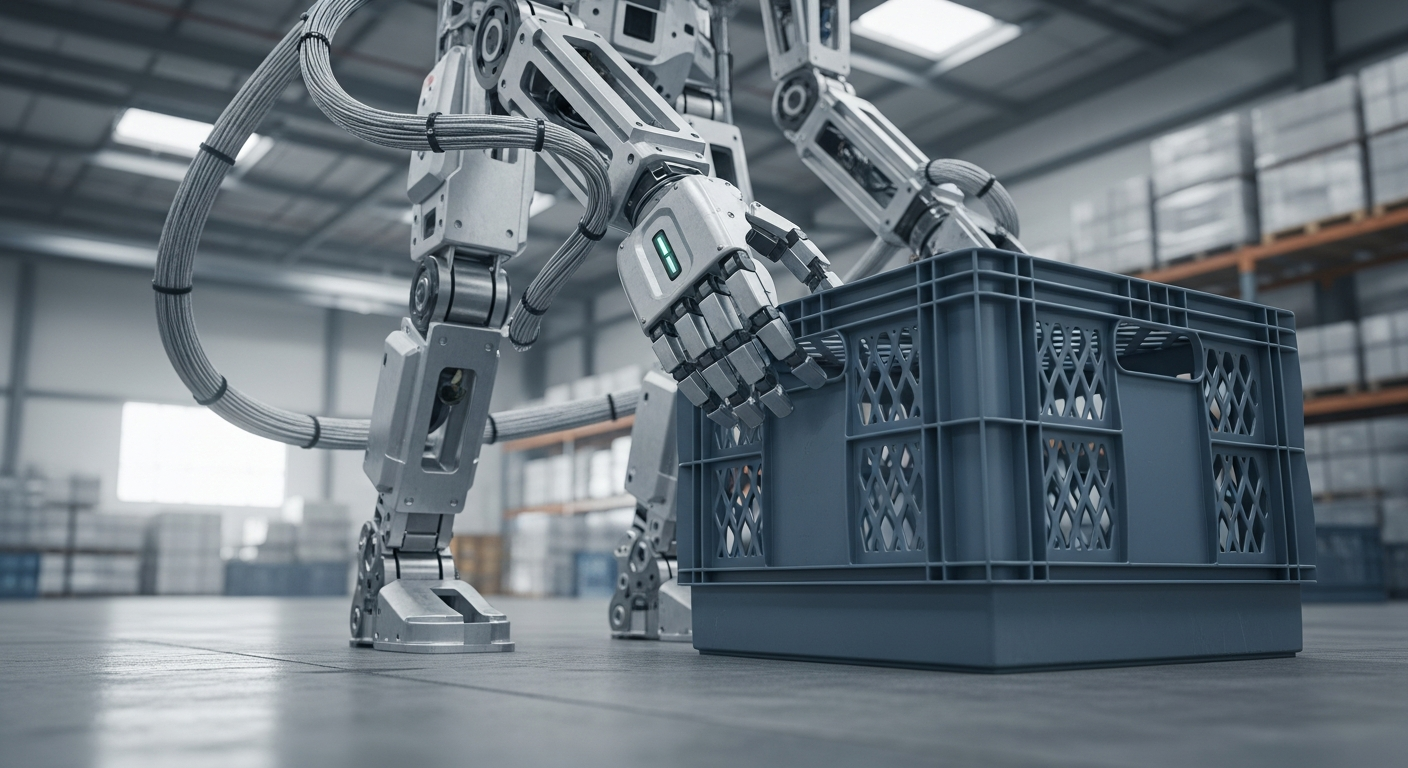

Most people picture robotics as specialized machinery kept behind factory cages. Modern humanoid robot development is instead moving toward machines that can navigate non-standard human environments. Your future interaction with machines will therefore go beyond clicking buttons on a screen: you will see them performing complex, multi-step tasks in unpredictable settings such as homes or service centers.

The core shift involves how machines understand their surroundings. Older systems relied on fixed, repeatable movements that worked only when every part was precisely positioned. Current systems can adjust their posture and grip in real time based on visual feedback. This closed-loop behavior is the cornerstone of effective AI workflow integration in the physical world.
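The idea of adjusting grip from visual feedback can be sketched as a simple closed-loop correction. This is only an illustration under stated assumptions: `GripState`, `grip_correction`, `run_loop`, the gain, and the per-cycle step limit are hypothetical names and values, and a real robot would use a full perception and control stack rather than a single proportional step.

```python
from dataclasses import dataclass


@dataclass
class GripState:
    width_mm: float  # current gripper opening


def grip_correction(state: GripState, observed_width_mm: float,
                    gain: float = 0.5, max_step_mm: float = 5.0) -> GripState:
    """Move the gripper opening part of the way toward the width estimated
    from the camera, limiting how far it may move in one cycle.

    gain and max_step_mm are illustrative values, not tuned parameters.
    """
    error = observed_width_mm - state.width_mm
    step = max(-max_step_mm, min(max_step_mm, gain * error))
    return GripState(width_mm=state.width_mm + step)


def run_loop(initial_width_mm: float, observations: list[float]) -> list[float]:
    """Apply one correction per visual observation and record each width."""
    state = GripState(initial_width_mm)
    history = []
    for observed in observations:
        state = grip_correction(state, observed)
        history.append(state.width_mm)
    return history
```

For example, `run_loop(80.0, [60.0] * 4)` closes the gripper from 80 mm toward a 60 mm object in bounded steps. The step limit is the design choice worth noting: it keeps a single noisy camera estimate from causing a sudden, large movement.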

For the average user, this means household robotics will soon stop requiring perfectly cleared paths to operate. As software intelligence improves, these systems will navigate cluttered rooms and interact with soft objects without constant human supervision. The user interface is moving from specialized command lines to natural language and gesture-based interaction.

ROI for Business and Humanoid Robot Development

The enterprise financial case for investing in humanoid robot development is pivoting from simple labor replacement to process augmentation. Companies are discovering that the highest return comes from deploying these units in high-turnover, physically demanding roles. By offloading repetitive strain tasks, businesses see improved employee retention and reduced insurance costs over a three-year horizon.

A critical, often overlooked factor is the reduction in integration friction. Modern testing environments allow firms to simulate thousands of scenarios before the hardware ever reaches the floor. This simulation-first approach cuts traditional time-to-deployment by 35% compared to legacy automation methods. When you extend your automation layers to include physical mobility, the capacity to scale operations grows substantially.
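A simulation-first workflow of this kind can be sketched as a randomized scenario sweep. The snippet below is a toy illustration, not a physics simulator: `simulate_pick`, `sweep`, and the friction and clutter thresholds are hypothetical stand-ins for whatever simulation environment a team actually uses.

```python
import random


def simulate_pick(friction: float, clutter: float,
                  min_friction: float = 0.3, max_clutter: float = 0.8) -> bool:
    """Toy stand-in for a physics simulation: the pick succeeds when the
    surface offers enough friction and the scene is not too cluttered.
    The thresholds are placeholders, not measured values."""
    return friction >= min_friction and clutter <= max_clutter


def sweep(num_scenarios: int, seed: int = 0) -> float:
    """Run randomized scenarios and return the fraction that succeed."""
    rng = random.Random(seed)  # seeded so a sweep can be reproduced exactly
    successes = 0
    for _ in range(num_scenarios):
        friction = rng.uniform(0.0, 1.0)
        clutter = rng.uniform(0.0, 1.0)
        if simulate_pick(friction, clutter):
            successes += 1
    return successes / num_scenarios
```

Calling `sweep(5000)` evaluates five thousand randomized scenes without touching hardware. Seeding the generator means a failing scenario set can be replayed after a software change, which is where much of the saved integration time would plausibly come from.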

Investors are moving toward firms that prioritize software-defined robotics rather than those focusing solely on custom hardware shells. The long-term value lies in the agility of the software stack to adapt to new tasks without replacing the physical chassis. Firms that lean into this strategy will gain a clear competitive advantage in operational resilience.

Technical Intelligence Sources

Understanding the architecture behind these systems is essential for making informed procurement decisions. Development teams are increasingly moving away from closed, proprietary stacks in favor of collaborative, interoperable frameworks.

A key primary source for current hardware-software integration is the ROS-Industrial open-source project, which extends ROS to industrial hardware. It provides the backbone for connecting disparate sensors and actuators into a unified system capable of complex movement. Additionally, researchers rely heavily on IEEE Robotics and Automation Society publications for guidance on standardization, including safety and torque limitations in human-populated spaces.
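The pattern of connecting sensors and actuators through named topics, with a torque limit enforced at the actuator, can be sketched in plain Python. This is an assumption-laden illustration of the publish/subscribe idea only: it does not use the ROS or ROS-Industrial API, and `MessageBus`, `JointActuator`, the topic names, the 0.5 scaling factor, and the 10 N·m limit are all hypothetical and not taken from any standard.

```python
from collections import defaultdict
from typing import Callable


class MessageBus:
    """In-process topic bus: publishers and subscribers share only a topic name."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[float], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[float], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, value: float) -> None:
        for callback in self._subscribers[topic]:
            callback(value)


class JointActuator:
    """Hypothetical actuator that clamps every torque command to a limit."""

    def __init__(self, max_torque_nm: float) -> None:
        self.max_torque_nm = max_torque_nm
        self.applied_torque_nm = 0.0

    def on_command(self, torque_nm: float) -> None:
        limit = self.max_torque_nm
        self.applied_torque_nm = max(-limit, min(limit, torque_nm))


bus = MessageBus()
elbow = JointActuator(max_torque_nm=10.0)  # placeholder limit, not from a standard
bus.subscribe("elbow/torque_cmd", elbow.on_command)

# Controller: turn each force-sensor reading into a torque command.
bus.subscribe("wrist/force",
              lambda force_n: bus.publish("elbow/torque_cmd", force_n * 0.5))

bus.publish("wrist/force", 8.0)   # command of 4.0 is within the limit
bus.publish("wrist/force", 50.0)  # command of 25.0 is clamped to 10.0
```

Because the sensor, controller, and actuator know only topic names, any one of them can be swapped without changing the others. Placing the clamp inside the actuator, rather than in the controller, means the limit holds regardless of which component sends the command.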

Future Strategic Implications

The trajectory of humanoid robot development is closely tied to the falling cost of advanced sensor arrays. As lidar, depth sensors, and haptic feedback sensors decrease in price, the barrier to entry into the field drops. We are approaching a point where the cost of building a basic, functional prototype is comparable to the cost of high-end consumer electronics.

Decision-makers should view these robots not as static assets but as dynamic nodes within a broader digital ecosystem. Future success depends on how well these physical units communicate with existing backend infrastructure. Leaders must prepare their organizations for a transition in which digital and physical lines of business are unified through a single, intelligent control layer.

Source Intelligence: TechCrunch Analysis on Robotics Trends

Fact-checked and technically reviewed by Joe Kunz, March 30, 2026.