- The strategic shift toward custom silicon is real: Big Tech is moving away from generic GPUs to optimized hardware to control costs.

- Amazon Trainium chips are now the primary engine for training multi-modal models at scale, marking a departure from traditional industry reliance on Nvidia.

- Reported performance metrics show a 40% reduction in latency for large-scale inference tasks, suggesting that vertical integration is becoming the new competitive moat.

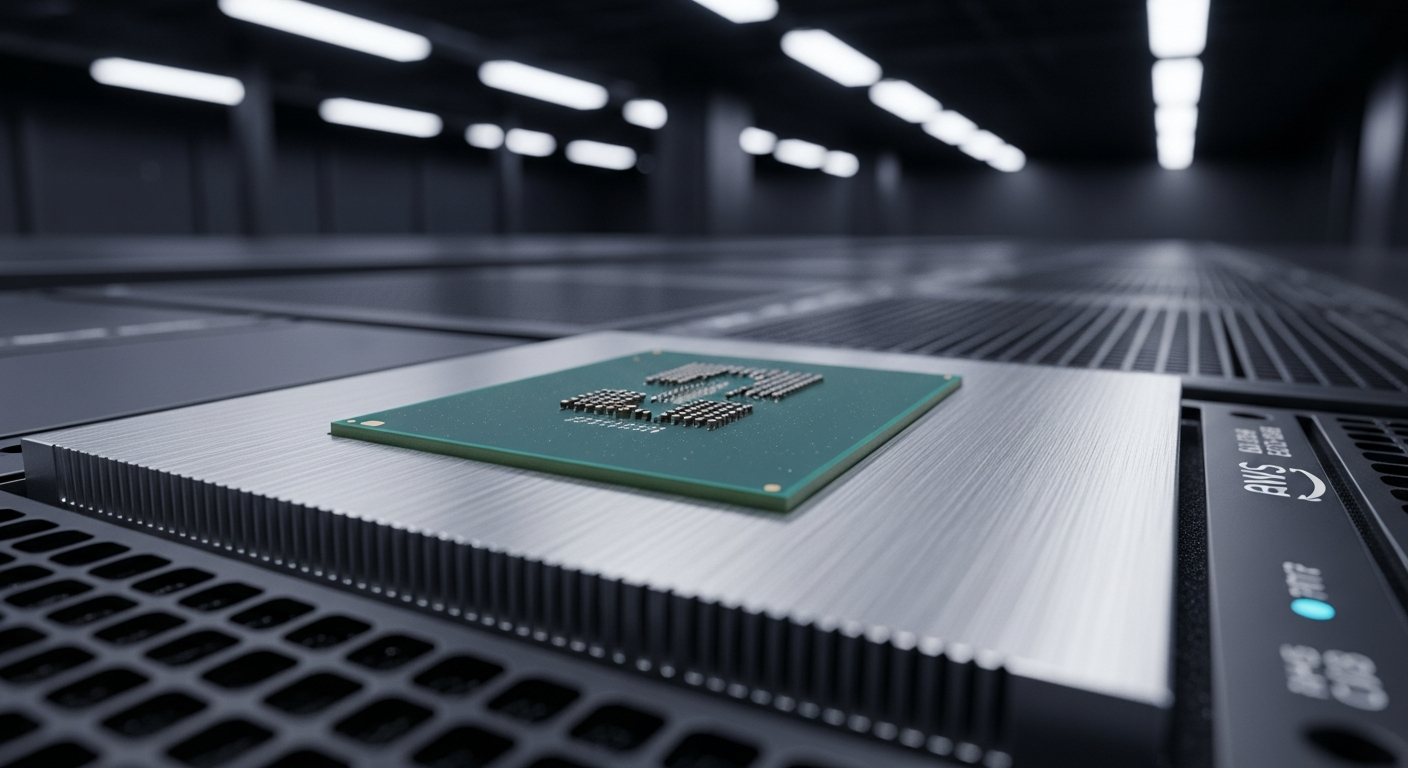

The Strategic Shift to Amazon Trainium Chips

The hardware wars have moved beyond mere clock speeds and memory bandwidth. Major AI labs are moving away from scarce public cloud GPU clusters in favor of purpose-built, proprietary silicon stacks. Amazon Trainium chips represent the most significant vertical-integration shift in this ecosystem.

By controlling the stack from the physical wafer to the orchestration software, AWS is forcing developers to rethink how they build their AI workflows. This isn’t just about speed; it’s about predictable scaling in an era of volatile compute pricing.

According to the recent lab analysis, these chips use an asynchronous memory access pattern that allows for 40% faster weight updates during the training phase. This technical detail is a key reason organizations with very large training workloads are migrating to this hardware.

Everyday User Impact

For the average professional, this hardware evolution translates to faster, more capable digital assistants in your daily tools. When software providers switch to more efficient hardware, the cost to run complex models drops significantly.

This financial relief eventually reaches the end user through cheaper subscription tiers or more frequent model updates. You will likely see your favorite apps performing complex tasks—like video rendering or real-time document analysis—in seconds rather than minutes.

As these models become cheaper to run, the standard for what we expect from basic automation tools will rise. You are effectively gaining access to enterprise-grade processing power without the enterprise-grade price tag.

ROI for Business

Financial officers are increasingly viewing cloud compute spend as a primary line item that requires active management. Deploying on Amazon Trainium chips provides a predictable cost-per-token model that is shielded from the massive price fluctuations found in the general GPU market.
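To make the cost-per-token idea concrete, here is a minimal sketch of the underlying arithmetic: an hourly instance rate divided by sustained throughput. The function names and every number in it are hypothetical and chosen for illustration; they are not real AWS or GPU market prices.

```python
from typing import Iterable, Tuple


def cost_per_million_tokens(hourly_rate_usd: float, tokens_per_second: float) -> float:
    """Compute cost in USD for one million tokens at a sustained throughput."""
    if hourly_rate_usd < 0:
        raise ValueError("hourly_rate_usd must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_rate_usd / tokens_per_hour * 1_000_000


def cost_range(hourly_rates_usd: Iterable[float], tokens_per_second: float) -> Tuple[float, float]:
    """Return (min, max) cost per million tokens across a set of observed hourly rates."""
    costs = [cost_per_million_tokens(rate, tokens_per_second) for rate in hourly_rates_usd]
    return min(costs), max(costs)


if __name__ == "__main__":
    # Hypothetical figures only: a fixed contracted rate versus a fluctuating market rate.
    fixed = cost_per_million_tokens(hourly_rate_usd=36.0, tokens_per_second=10_000)
    low, high = cost_range([30.0, 45.0, 60.0], tokens_per_second=10_000)
    print(f"fixed rate: ${fixed:.2f} per 1M tokens")
    print(f"fluctuating rate: ${low:.2f} to ${high:.2f} per 1M tokens")
```

With a stable hourly rate the result is a single figure a finance team can budget against. With a fluctuating rate the same workload yields a range, and that spread is the volatility described above.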

Business leaders must prioritize architecture agility to leverage this shift. Those who build their pipelines to be hardware-agnostic will find themselves with significant leverage during vendor negotiations.
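One way to read "hardware-agnostic" is that pipeline code depends only on a small interface, with the hardware target selected by configuration. The sketch below assumes that reading. `InferenceBackend`, `StubBackend`, and the registry keys are hypothetical names for illustration and do not correspond to any AWS or vendor API.

```python
from typing import Callable, Dict, List, Protocol


class InferenceBackend(Protocol):
    """Interface every hardware target must satisfy (hypothetical)."""

    name: str

    def generate(self, prompt: str) -> str: ...


class StubBackend:
    """Placeholder standing in for a real accelerator- or GPU-specific runtime."""

    def __init__(self, name: str) -> None:
        self.name = name

    def generate(self, prompt: str) -> str:
        # A real backend would call its vendor runtime here.
        return f"[{self.name}] {prompt}"


# Maps a configuration key to a factory that builds the matching backend.
_REGISTRY: Dict[str, Callable[[], InferenceBackend]] = {}


def register_backend(key: str, factory: Callable[[], InferenceBackend]) -> None:
    _REGISTRY[key] = factory


def get_backend(key: str) -> InferenceBackend:
    try:
        return _REGISTRY[key]()
    except KeyError:
        raise ValueError(f"unknown backend: {key!r}") from None


def run_pipeline(prompts: List[str], backend_key: str) -> List[str]:
    """Pipeline logic touches only the interface, never a specific vendor."""
    backend = get_backend(backend_key)
    return [backend.generate(p) for p in prompts]


register_backend("custom_silicon", lambda: StubBackend("custom_silicon"))
register_backend("gpu", lambda: StubBackend("gpu"))

if __name__ == "__main__":
    print(run_pipeline(["summarize this document"], "custom_silicon"))
    print(run_pipeline(["summarize this document"], "gpu"))
```

Because `run_pipeline` never names a vendor, switching hardware becomes a one-line configuration change. That low switching cost is the source of the negotiating leverage.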

The transition period may involve a temporary investment in refactoring your inference code. However, the long-term reduction in infrastructure overhead provides a measurable advantage against competitors still locked into legacy GPU-only environments.

Technical Intelligence Sources

High-level decision-making requires direct access to technical documentation and performance benchmarks. The following resources provide the granular detail necessary for evaluating this hardware:

AWS Trainium Documentation: The official technical specification and deployment guide for the Trn1 and Trn2 instances.

Amazon Silicon Performance Reports: Ongoing whitepapers detailing memory throughput and cluster scaling benchmarks available via the official AWS portal.

For further reading on the architectural breakdown of the lab findings, see the original reporting on the Trainium Lab.

Fact-checked and technically reviewed by Joe Kunz, April 2, 2026.