Executive Briefing

- Elon Musk is transitioning Tesla and SpaceX from hardware integrators to sovereign silicon producers, aiming to eliminate reliance on external foundries and third-party designers like NVIDIA.

- The move focuses on proprietary chip designs built on 3nm and 2nm process nodes and optimized for real-time spatial inference, a direct requirement for scaling Full Self-Driving (FSD) and Optimus humanoid robotics.

- By internalizing the semiconductor lifecycle, Musk’s ventures aim to bypass the “silicon tax” and supply chain bottlenecks that currently dictate the pace of AI deployment across the automotive and aerospace sectors.

Everyday User Impact

For the average person, this shift means the technology in your driveway and above your head will start evolving at a much faster rate. If you own a Tesla, the car’s ability to navigate complex intersections or sudden road hazards will become smoother and more “human-like” because the computer inside is no longer a general-purpose processor; it is a custom-built brain designed for one specific task. You will notice fewer jerky movements and faster decision-making in autonomous modes.

For Starlink users, this translates to smaller, more power-efficient satellite dishes that can handle higher speeds with less lag. The most visible change, however, will be in the cost and capability of home robotics. By manufacturing his own chips, Musk can lower the price of hardware like the Optimus robot, potentially bringing it closer to the price of a mid-sized sedan rather than a piece of specialized industrial equipment. Your devices will essentially get smarter while using less battery, leading to longer runtimes and more reliable performance without needing a constant connection to a central server.

ROI for Business

The financial logic behind this move centers on margin expansion and de-risking. Companies currently pay a steep premium for high-end GPUs and often wait months for shipments that are exposed to geopolitical instability. By owning the fabrication process, Tesla and SpaceX can drastically reduce their cost-per-unit for AI compute. Vertical integration also tightens the feedback loop between software engineering and hardware design, meaning features can move from prototype to production in weeks rather than years. For investors, this represents a bet on moving from a hardware manufacturing model toward a high-margin technology ecosystem. The primary risk lies in the massive upfront capital expenditure required to build and maintain fabrication facilities, but the long-term payoff is a moat that competitors reliant on off-the-shelf silicon cannot easily cross.
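
The trade-off between upfront capital expenditure and lower cost-per-unit can be framed as a break-even volume. The sketch below is a minimal illustration only: the function name and every dollar figure are hypothetical placeholders, not reported numbers for Tesla, SpaceX, or any supplier.

```python
import math


def break_even_units(upfront_capex, external_price_per_chip, internal_cost_per_chip):
    """Number of chips needed before in-house production pays back its capex.

    Returns None when in-house chips are not cheaper per unit, because the
    upfront spend is then never recovered.
    """
    saving_per_chip = external_price_per_chip - internal_cost_per_chip
    if saving_per_chip <= 0:
        return None
    return math.ceil(upfront_capex / saving_per_chip)


# Hypothetical inputs chosen only to show the arithmetic.
units = break_even_units(
    upfront_capex=10_000_000_000,    # assumed fab investment in dollars
    external_price_per_chip=10_000,  # assumed price of a purchased accelerator
    internal_cost_per_chip=2_000,    # assumed marginal cost of an in-house chip
)
print(f"Break-even volume: {units:,} chips")
```

The point of the exercise is the shape of the bet rather than the figures: the smaller the per-chip saving, the larger the volume needed before the moat starts paying for itself.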

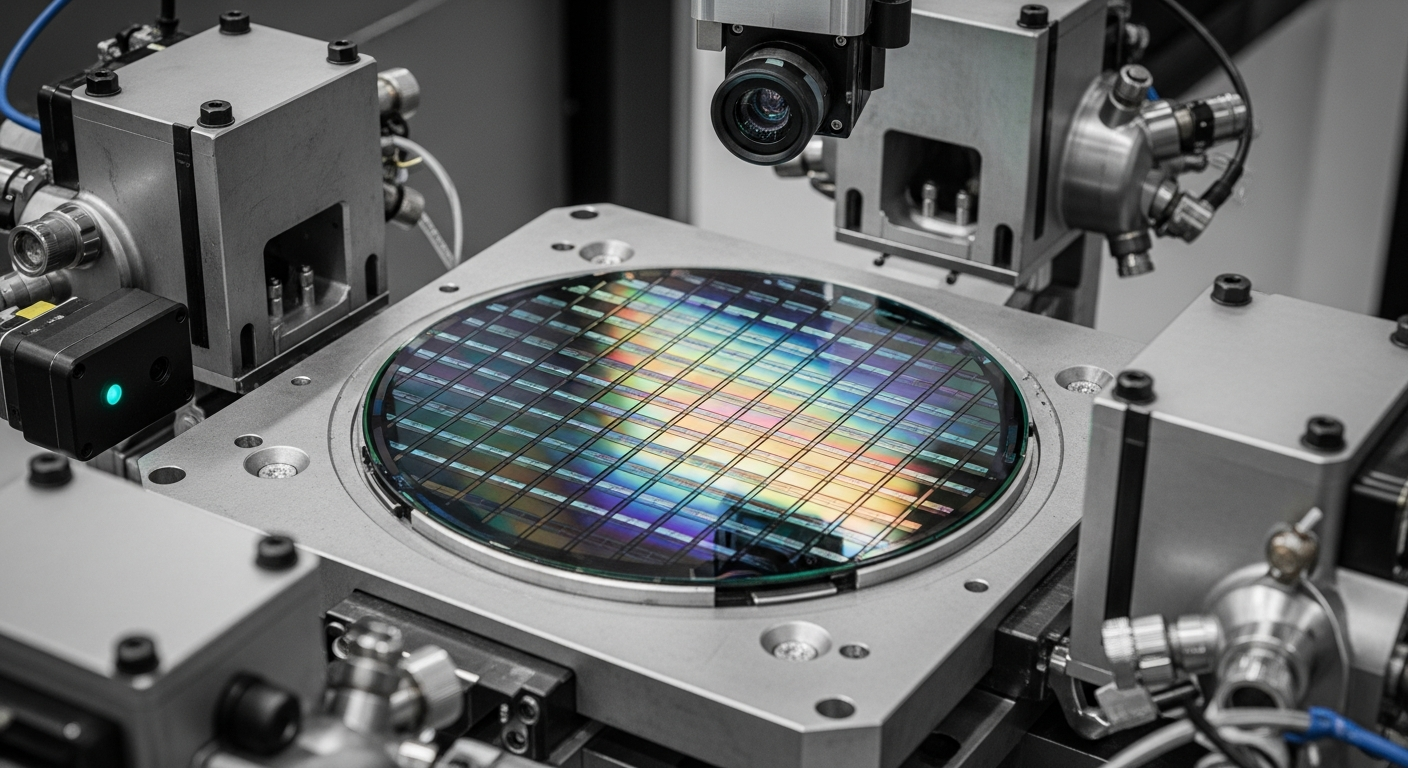

The Technical Shift

The industry is witnessing a move away from general-purpose computing toward Application-Specific Integrated Circuits (ASICs). While NVIDIA’s H100s are excellent for training large language models in massive data centers, they are not optimized for the “edge”: the physical world where cars and robots operate. Musk’s new strategy focuses on “inference at the edge,” where the chip must process gigabytes of video data every second with very low latency and minimal power consumption.
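
To give a sense of what "gigabytes of video data every second" implies, here is a rough back-of-the-envelope sketch. The camera count, resolution, bit depth, and frame rate are illustrative assumptions, not published specifications of any vehicle or robot, and the function names are hypothetical.

```python
def raw_video_rate_bytes_per_s(cameras, width, height, bits_per_pixel, fps):
    """Uncompressed sensor data rate, in bytes per second, across all cameras."""
    bits_per_frame = width * height * bits_per_pixel
    return cameras * bits_per_frame * fps / 8


def frame_budget_ms(fps):
    """Time available to process one frame before the next one arrives."""
    return 1000.0 / fps


# Assumed setup: 8 cameras, 2592x1944 pixels, 12 bits per pixel, 30 frames/s.
rate = raw_video_rate_bytes_per_s(
    cameras=8, width=2592, height=1944, bits_per_pixel=12, fps=30
)
budget = frame_budget_ms(30)

print(f"Raw input rate: {rate / 1e9:.2f} GB/s")
print(f"Per-frame processing budget: {budget:.1f} ms")
```

Under these assumptions the chip sees roughly 1.8 GB of raw pixels per second and has about 33 ms to act on each frame, which is why latency and power draw matter more at the edge than peak training throughput.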

This technical realignment involves hardware-software co-design. Instead of writing code to fit the limitations of a standard chip, Musk’s engineers are building the silicon to mirror the specific neural network architectures that Tesla trains on its “Dojo” supercomputer and deploys in its FSD systems. This eliminates computational cycles wasted on tasks the car or robot doesn’t need to perform. Architecturally, this means shifting toward high-bandwidth memory (HBM) integration and proprietary interconnects that allow chips to communicate with one another at speeds that exceed current industry standards. This is not just a manufacturing play; it is a total redesign of how AI interacts with the physical world, prioritizing throughput and efficiency over raw, generalized power.
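
One way to see why memory bandwidth features so heavily in this kind of design is the roofline model, a standard first-order estimate in which attainable throughput is the lesser of peak compute and memory bandwidth multiplied by arithmetic intensity. The sketch below applies it to two hypothetical chips; all figures are made-up placeholders, not specifications of any real part.

```python
def attainable_tflops(peak_tflops, mem_bandwidth_tb_s, flops_per_byte):
    """Roofline estimate: throughput is capped by compute or by memory traffic.

    TB/s multiplied by FLOP/byte gives TFLOP/s, so both terms share a unit.
    """
    return min(peak_tflops, mem_bandwidth_tb_s * flops_per_byte)


# Hypothetical workload: 50 floating-point operations per byte fetched.
intensity = 50.0

# Two hypothetical chips with identical peak compute, different memory systems.
conventional = attainable_tflops(
    peak_tflops=100.0, mem_bandwidth_tb_s=0.2, flops_per_byte=intensity
)
hbm_based = attainable_tflops(
    peak_tflops=100.0, mem_bandwidth_tb_s=2.0, flops_per_byte=intensity
)

print(f"Conventional memory: {conventional:.0f} TFLOP/s attainable")
print(f"HBM-class memory:    {hbm_based:.0f} TFLOP/s attainable")
```

In this toy comparison the chip with the slower memory system can use only a tenth of its nominal compute, which illustrates the sense in which a design can prioritize throughput and efficiency over raw, generalized power.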