Executive Briefing

- Amazon is moving beyond its role as a cloud infrastructure provider to become a chip designer in its own right, using its proprietary Trainium2 and Trainium3 chips to challenge Nvidia’s dominance of the AI accelerator market.

- Strategic partnerships with Anthropic, OpenAI, and Apple signal a massive industry migration toward custom silicon to mitigate the high costs and supply chain volatility of general-purpose GPUs.

- The move toward AWS-native hardware represents a pivotal shift in the AI arms race, prioritizing energy efficiency and specialized interconnects over raw, unoptimized power.

Everyday User Impact

While the physical Trainium chips remain hidden inside massive data centers, their influence will be felt in the speed and cost of the digital tools you use daily. When a company like Apple or Anthropic trains its AI models on more efficient hardware, the benefits can reach your device in two ways: performance and price. You may notice smarter, more responsive voice assistants and AI-driven photo editing tools that take less time to process. Because Amazon’s custom silicon lowers the astronomical cost of “teaching” these models, companies have less expense to pass on to you. This shift makes it more likely that premium AI features will remain affordable or even free, rather than hidden behind rising monthly subscription fees. Essentially, more efficient hardware lets the AI in your pocket become more capable without becoming more expensive.

ROI for Business

For executive leadership, the “Nvidia tax” has become a significant barrier to maintaining healthy margins in AI development. Amazon’s Trainium offers a strategic exit from this high-cost ecosystem by promising a 30% to 50% improvement in price-performance. For organizations spending millions on compute each month, this transition directly affects the bottom line by reclaiming capital that would otherwise go to hardware premiums. Beyond immediate cost savings, AWS-native silicon provides a hedge against supply chain instability. Businesses that integrate Trainium into their workflows gain prioritized access to hardware that is less exposed to the global shortages affecting traditional GPUs. This reliability allows for more predictable scaling and faster time-to-market for AI-driven products, turning compute from a volatile expense into a controllable strategic asset.
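To make the price-performance figure concrete, here is a minimal sketch that converts a gain in price-performance into the cost reduction for a fixed workload. The 30% and 50% figures come from the paragraph above; the $2M monthly spend and the function names are hypothetical, and the calculation assumes the workload moves over with no migration cost or efficiency loss.

```python
def cost_after_improvement(baseline_cost: float, improvement: float) -> float:
    """Cost of the same workload after a price-performance gain.

    `improvement` is a fraction: 0.30 means 30% more work per dollar.
    """
    if improvement <= -1.0:
        raise ValueError("improvement must be greater than -100%")
    return baseline_cost / (1.0 + improvement)


def savings_fraction(improvement: float) -> float:
    """Fraction of spend saved when the amount of work stays fixed."""
    return 1.0 - 1.0 / (1.0 + improvement)


if __name__ == "__main__":
    monthly_spend = 2_000_000  # hypothetical baseline, in dollars
    for gain in (0.30, 0.50):
        new_cost = cost_after_improvement(monthly_spend, gain)
        print(
            f"{gain:.0%} better price-performance: "
            f"${new_cost:,.0f}/month, {savings_fraction(gain):.1%} saved"
        )
```

Under these assumptions, a 30% gain in work per dollar corresponds to roughly 23% lower spend for the same work, and a 50% gain to about 33%, so the savings are smaller than the headline percentage suggests.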

The Technical Shift

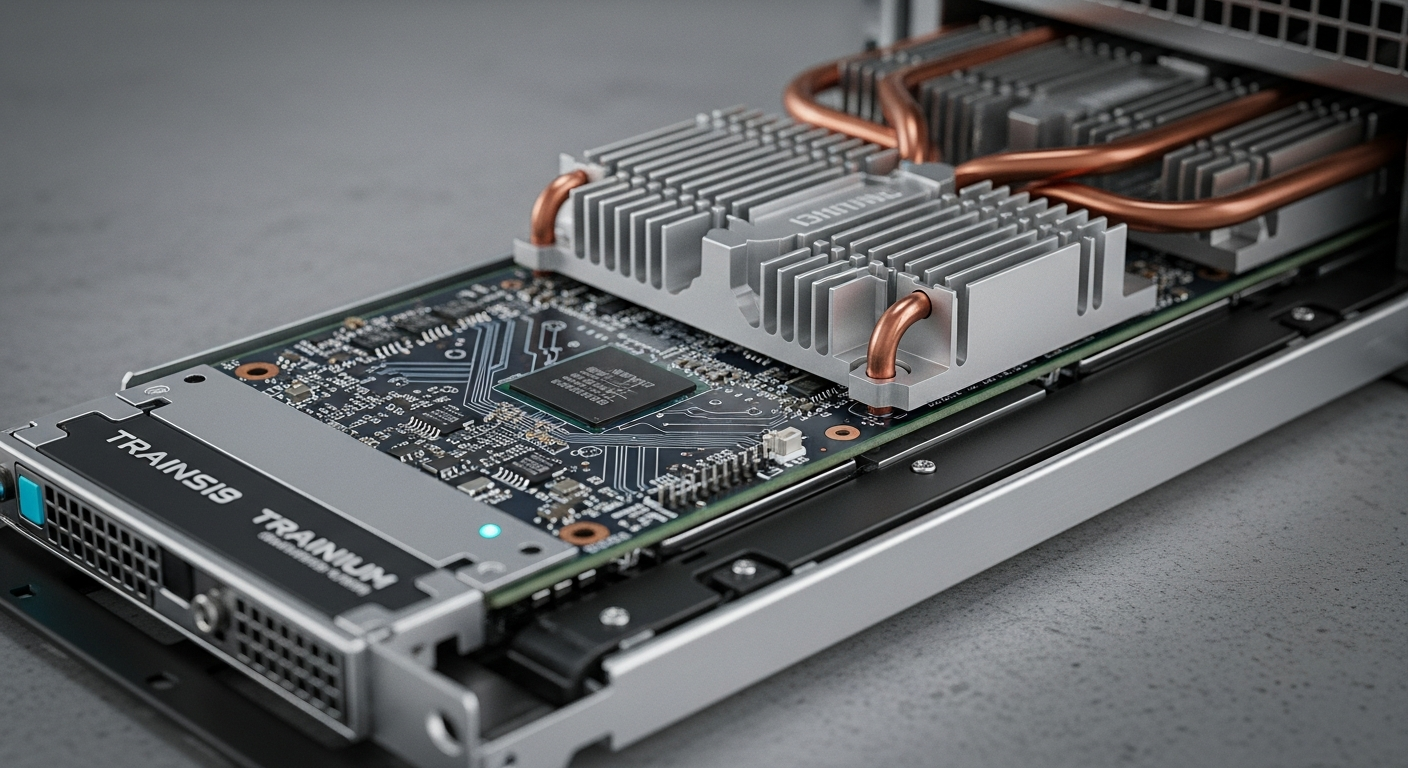

The core of this transformation is the departure from general-purpose Graphics Processing Units (GPUs) toward Application-Specific Integrated Circuits (ASICs). While traditional GPUs are designed for a wide array of mathematical tasks, Trainium is built around the specific data-flow requirements of transformer models. Amazon has re-engineered the hardware at the silicon level to optimize the “interconnect”: the communication pathways that allow thousands of chips to function as a single unit. By controlling the entire stack, from the transistor to the data center cooling system, Amazon can remove overhead and bottlenecks that come with third-party hardware. The focus is no longer on raw clock speeds alone; instead, the priority is “performance-per-watt.” Because data centers are limited by the power they can supply and cool, this specialization allows for much higher density, enabling more complex model training with a significantly smaller energy footprint. This is a shift from brute-force computing to precision-engineered intelligence infrastructure.
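The link between performance-per-watt and data center density can be illustrated with a small sketch. All numbers below are hypothetical and do not describe any real chip; the model also ignores cooling, networking, and host power, and assumes that throughput scales linearly with chip count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    tflops: float  # sustained throughput per chip (hypothetical)
    watts: float   # power draw per chip (hypothetical)

    @property
    def perf_per_watt(self) -> float:
        return self.tflops / self.watts


def rack_throughput(chip: Accelerator, rack_power_budget_w: float) -> tuple[int, float]:
    """Return how many chips fit in a power budget and their combined TFLOPS."""
    count = int(rack_power_budget_w // chip.watts)
    return count, count * chip.tflops


if __name__ == "__main__":
    # Illustrative values only: a fast, power-hungry chip versus a slower,
    # more efficient one.
    general_purpose = Accelerator("general-purpose", tflops=1000.0, watts=1000.0)
    specialized = Accelerator("specialized", tflops=600.0, watts=400.0)

    budget_w = 40_000.0  # hypothetical power available to one rack
    for chip in (general_purpose, specialized):
        count, total = rack_throughput(chip, budget_w)
        print(f"{chip.name}: {chip.perf_per_watt:.2f} TFLOPS/W, "
              f"{count} chips, {total:,.0f} TFLOPS per rack")
```

In this toy comparison the specialized chip is slower per unit, but because the rack is power-limited, its higher performance-per-watt yields more total throughput from the same budget. That is the sense in which efficiency, rather than per-chip speed, drives density.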
