Executive Briefing

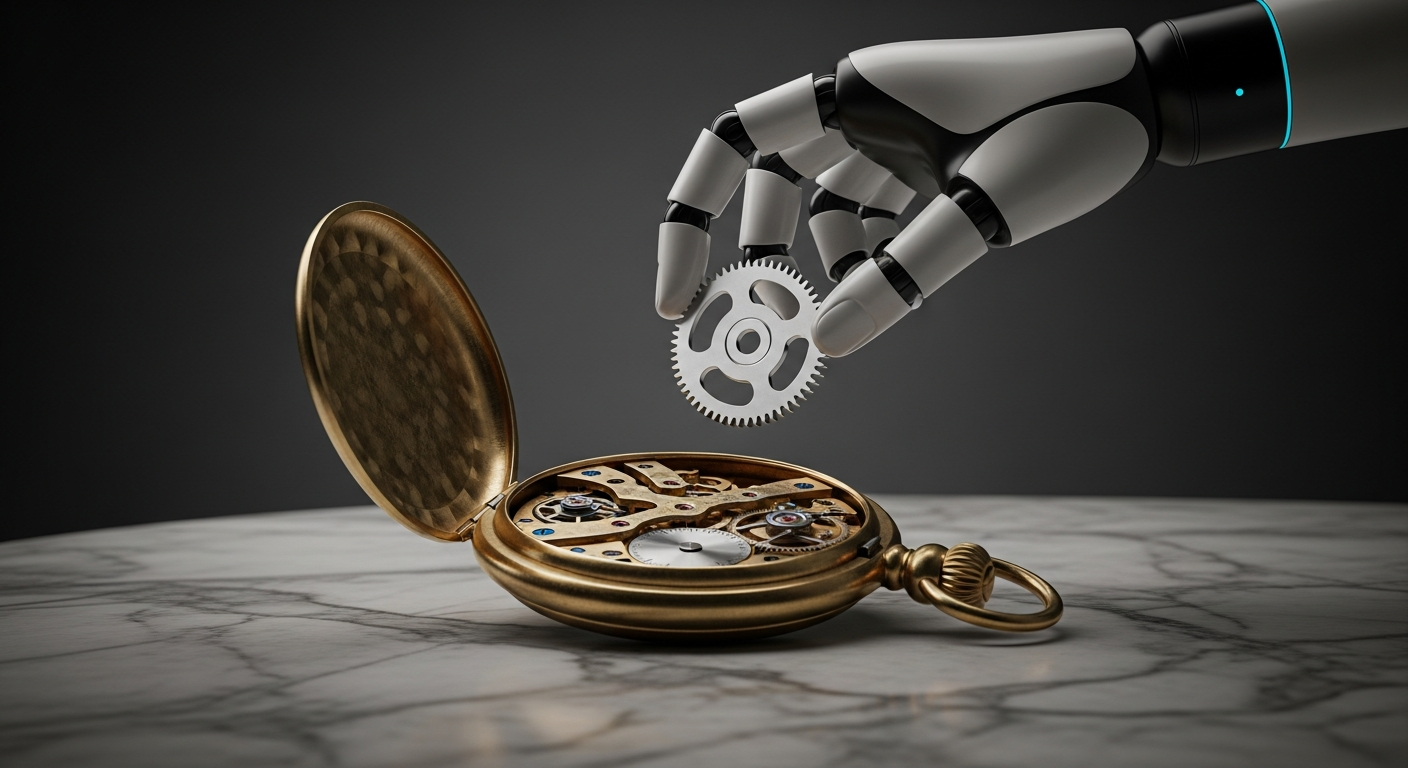

- The transition from OpenAI’s GPT-4 to the “o1” (Strawberry) reasoning series marks a pivot from rapid pattern matching to “System 2” deliberate thinking, prioritizing logical accuracy over response speed.

- These models rely on inference-time compute: the model spends extra processing power at answer time to work through an internal “chain of thought,” checking and revising its intermediate steps before generating a final answer.

- This shift aims to narrow the “hallucination gap” in high-stakes fields such as mathematics, legal analysis, and software engineering, where a single logical error can render the entire output useless.
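The idea of spending extra processing power to self-correct and iterate can be pictured as a propose-and-check loop whose success depends on how much compute it is allowed. The sketch below is a generic illustration under stated assumptions, not OpenAI's method (o1's internals are not public). `solve_with_retries`, `propose`, and `verify` are hypothetical names, and the toy task (finding a square root) was chosen only because a correct answer is easy to check.

```python
from typing import Callable, Optional


def solve_with_retries(
    propose: Callable[[int], int],
    verify: Callable[[int], bool],
    budget: int,
) -> Optional[int]:
    """Spend up to `budget` attempts; return the first candidate that passes `verify`."""
    for attempt in range(budget):
        candidate = propose(attempt)  # hypothetical "draft" step
        if verify(candidate):         # hypothetical internal check
            return candidate          # only a checked answer is surfaced
    return None                       # budget exhausted without a checked answer


# Toy task: find x such that x * x == 1369.
TARGET = 1369


def propose(attempt: int) -> int:
    """Naive guesser: tries 30, 31, 32, ... on successive attempts."""
    return 30 + attempt


def verify(x: int) -> bool:
    """Cheap, exact check of a candidate answer."""
    return x * x == TARGET


print(solve_with_retries(propose, verify, budget=5))   # -> None (too little compute)
print(solve_with_retries(propose, verify, budget=10))  # -> 37
```

The `budget` parameter is the design point: the same loop returns nothing with a small budget and a checked answer with a larger one, mirroring the trade of response speed for accuracy.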

Everyday User Impact

For the average person, the frustration of “babysitting” an AI should ease considerably. Users often spend several minutes re-prompting an AI because it failed to follow a complex set of instructions or made a simple math error. Reasoning-based models are designed to behave more like a meticulous researcher than a fast-talking assistant. If you ask your phone to plan a three-week multi-city trip while balancing a specific budget, flight times, and dietary restrictions, the AI is less likely to simply guess a plausible itinerary. Instead, it takes time to check each connection and constraint internally before answering.
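The kind of internal constraint check described here can be sketched as a function that inspects a complete draft itinerary and reports every rule it breaks. This is a hypothetical illustration, not how any particular assistant works: the `Flight` class, its field names, and the two-hour minimum layover are assumptions, and dietary restrictions are omitted for brevity.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Flight:
    """Hypothetical flight record used only for this illustration."""
    origin: str
    dest: str
    depart: datetime
    arrive: datetime
    price: float


def check_itinerary(
    flights: List[Flight],
    budget: float,
    min_layover: timedelta = timedelta(hours=2),  # assumed threshold
) -> List[str]:
    """Return human-readable constraint violations; an empty list means the plan passes."""
    problems: List[str] = []
    total = sum(f.price for f in flights)
    if total > budget:
        problems.append(f"over budget by {total - budget:.2f}")
    # Check each consecutive pair of legs for route continuity and layover time.
    for prev, nxt in zip(flights, flights[1:]):
        if prev.dest != nxt.origin:
            problems.append(f"route gap: arrive {prev.dest}, depart {nxt.origin}")
        elif nxt.depart - prev.arrive < min_layover:
            problems.append(f"tight connection in {prev.dest}")
    return problems


trip = [
    Flight("LIS", "PAR", datetime(2025, 5, 1, 8, 0), datetime(2025, 5, 1, 11, 30), 180.0),
    Flight("PAR", "ROM", datetime(2025, 5, 8, 9, 0), datetime(2025, 5, 8, 11, 0), 150.0),
    Flight("ROM", "LIS", datetime(2025, 5, 22, 12, 0), datetime(2025, 5, 22, 14, 30), 200.0),
]
print(check_itinerary(trip, budget=500.0))  # -> ['over budget by 30.00']
```

In this framing, a draft that produces a non-empty list would be revised rather than shown to the user.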

In practice, you spend less time editing and more time executing. Whether you are debugging a home automation script or trying to understand a complex medical report, the interaction moves away from a “chat” and toward a “solution.” You will experience a delay in getting an answer, perhaps ten to thirty seconds, but the result should be much closer to a finished product and need fewer rounds of review. The era of the “second draft” is giving way to a more reliable “first-best” response.

ROI for Business

For the enterprise, the value proposition shifts from “content volume” to “verification labor reduction.” Traditionally, the hidden cost of AI adoption has been the salary of a human expert required to audit every word the AI produces. Reasoning models challenge this cost structure by performing more of their own internal quality checks. For a mid-sized firm, integrating these models into a development or legal pipeline could reduce technical debt and audit hours by an estimated 30% to 50%, though actual savings will depend heavily on the workflow. The main financial risk shifts from the model's inaccuracy toward the cost of tokens: reasoning models are significantly more expensive to run, in part because their hidden reasoning tokens are billed as output tokens. Strategic leaders must now decide which workflows justify the “premium thought” cost of a reasoning model and where a cheaper, faster model remains sufficient. This is a shift from measuring AI by words-per-minute to measuring it by accuracy-per-dollar.
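One simple way to make “accuracy-per-dollar” concrete is to compute the expected cost of delivering one correct result, counting both token spend and the human rework needed when the model is wrong. This is a minimal sketch under loudly stated assumptions: every price, token count, accuracy rate, and rework cost below is a made-up placeholder, not a published figure, and the model assumes every wrong answer is caught and fixed at a fixed cost.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    """All numbers used with this class below are hypothetical placeholders."""
    name: str
    usd_per_million_tokens: float  # assumed blended price
    tokens_per_task: int           # includes hidden reasoning tokens, if any
    accuracy: float                # fraction of tasks correct on the first pass


def expected_cost_per_task(m: ModelProfile, rework_cost: float) -> float:
    """Expected USD to deliver one correct result.

    Token spend is paid on every task; `rework_cost` (human time to catch and
    fix a wrong answer) is paid only on the fraction of tasks the model gets wrong.
    """
    token_cost = m.tokens_per_task / 1_000_000 * m.usd_per_million_tokens
    return token_cost + (1 - m.accuracy) * rework_cost


fast = ModelProfile("fast", 2.0, 2_000, 0.70)
reasoning = ModelProfile("reasoning", 20.0, 20_000, 0.90)

for rework in (30.0, 0.10):  # high-stakes expert review vs. nearly free fix-ups
    for m in (fast, reasoning):
        cost = expected_cost_per_task(m, rework)
        print(f"{m.name:>9} | rework ${rework:5.2f} | ${cost:.3f} per task")
```

With these made-up numbers, the reasoning model is cheaper per task when a mistake costs $30 of expert rework, while the fast model wins when fixing a mistake is nearly free: the workflow-by-workflow decision the paragraph describes.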

The Technical Shift

The core change happening behind the scenes is a shift in emphasis from scaling training, with ever more data and parameters, toward scaling “inference-time compute.” In the past, making an AI smarter mostly meant feeding it more of the internet during its training phase. Now, engineers are finding that letting a model “think” for longer while it answers a prompt can, on some tasks, yield better results than simply adding more parameters. By using reinforcement learning to reward productive reasoning, developers train the model to produce a private chain of thought. Within it, the model can test different hypotheses, discard those that lead to contradictions, and present only its final answer to the user.
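The general claim that spending more compute at inference time improves accuracy can be illustrated with self-consistency (majority voting over several independently sampled answers), a published inference-time technique. This is a swapped-in, simplified example, not a description of how o1 works, and it leaves out the reinforcement-learning training step entirely. `noisy_solver` is a hypothetical stand-in for one sampled chain of thought that is right 60% of the time.

```python
import random
from collections import Counter


def noisy_solver(rng: random.Random, correct: int, p_correct: float) -> int:
    """Hypothetical stand-in for one sampled chain of thought.

    Returns the correct answer with probability p_correct, otherwise one of
    several plausible-looking wrong answers.
    """
    if rng.random() < p_correct:
        return correct
    return correct + rng.choice([-3, -2, -1, 1, 2, 3])


def majority_vote(rng: random.Random, correct: int, p_correct: float, n_samples: int) -> int:
    """Sample n_samples independent answers and return the most common one."""
    votes = Counter(noisy_solver(rng, correct, p_correct) for _ in range(n_samples))
    return votes.most_common(1)[0][0]


def accuracy(n_samples: int, trials: int = 2000, p_correct: float = 0.6, seed: int = 0) -> float:
    """Fraction of trials in which majority voting recovers the right answer (42)."""
    rng = random.Random(seed)
    hits = sum(majority_vote(rng, 42, p_correct, n_samples) == 42 for _ in range(trials))
    return hits / trials


for n in (1, 5, 15):
    print(f"{n:>2} samples per question -> accuracy {accuracy(n):.3f}")
```

Accuracy rises with the number of samples (roughly 0.6 for a single sample, close to 1.0 at fifteen) because the wrong answers are scattered while the right one recurs. Each extra sample is also extra inference cost, which is the speed-versus-accuracy trade-off this section describes.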

This loosely mirrors how people tackle hard problems. When a human solves a complex puzzle, they do not just shout the first word that comes to mind; they work through the steps. Having the AI work through intermediate steps (even though that work is hidden from the final UI) tends to make its answers more reliable, but it does not fully solve the “black box” problem, since the raw reasoning is not shown to users. The model still generates text one token at a time, but its training encourages it to explore alternative lines of reasoning and abandon those that fail to meet the user’s criteria, behavior often likened to navigating a tree of possibilities and pruning dead branches. This is arguably one of the most significant shifts since the original transformer paper, moving the industry from “generative” AI toward “agentic” reasoning.
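The “tree of possibilities” metaphor corresponds to a textbook technique, depth-first search with constraint pruning, sketched here on the trip-planning example from earlier. It is an analogy only: o1's reasoning process is not public and is not claimed to run an explicit search like this. The city codes and fares are made up, and `search` and `fare` are hypothetical names.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical one-way fares between cities (USD); not real data.
FARES: Dict[Tuple[str, str], int] = {
    ("LIS", "PAR"): 120, ("LIS", "ROM"): 200, ("LIS", "BER"): 180,
    ("PAR", "ROM"): 90, ("PAR", "BER"): 110, ("ROM", "BER"): 130,
}


def fare(a: str, b: str) -> int:
    """Fares are symmetric in this toy table."""
    return FARES[(a, b)] if (a, b) in FARES else FARES[(b, a)]


def search(start: str, to_visit: List[str], budget: int) -> Tuple[Optional[List[str]], int]:
    """Depth-first search for the cheapest round trip visiting every city within budget.

    Returns (best_route, nodes_expanded). A partial route whose running cost
    already exceeds the budget is pruned, so its subtree is never explored.
    """
    best: Optional[List[str]] = None
    best_cost = budget + 1
    expanded = 0

    def dfs(route: List[str], cost: int, remaining: List[str]) -> None:
        nonlocal best, best_cost, expanded
        expanded += 1
        if cost > budget:  # prune: this branch already violates the constraint
            return
        if not remaining:
            total = cost + fare(route[-1], start)  # close the loop back home
            if total <= budget and total < best_cost:
                best, best_cost = route + [start], total
            return
        for city in remaining:
            rest = [c for c in remaining if c != city]
            dfs(route + [city], cost + fare(route[-1], city), rest)

    dfs([start], 0, to_visit)
    return best, expanded


print(search("LIS", ["PAR", "ROM", "BER"], budget=550))  # -> (['LIS', 'PAR', 'ROM', 'BER', 'LIS'], 16)
print(search("LIS", ["PAR", "ROM", "BER"], budget=300))  # -> (None, 14)
```

Pruning abandons any partial route as soon as it breaks the budget, so the tight budget expands 14 nodes instead of the full 16; in larger trees, pruning early branches can save far more work.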