The Action Pivot: Why AI is Stepping Outside the Chatbox

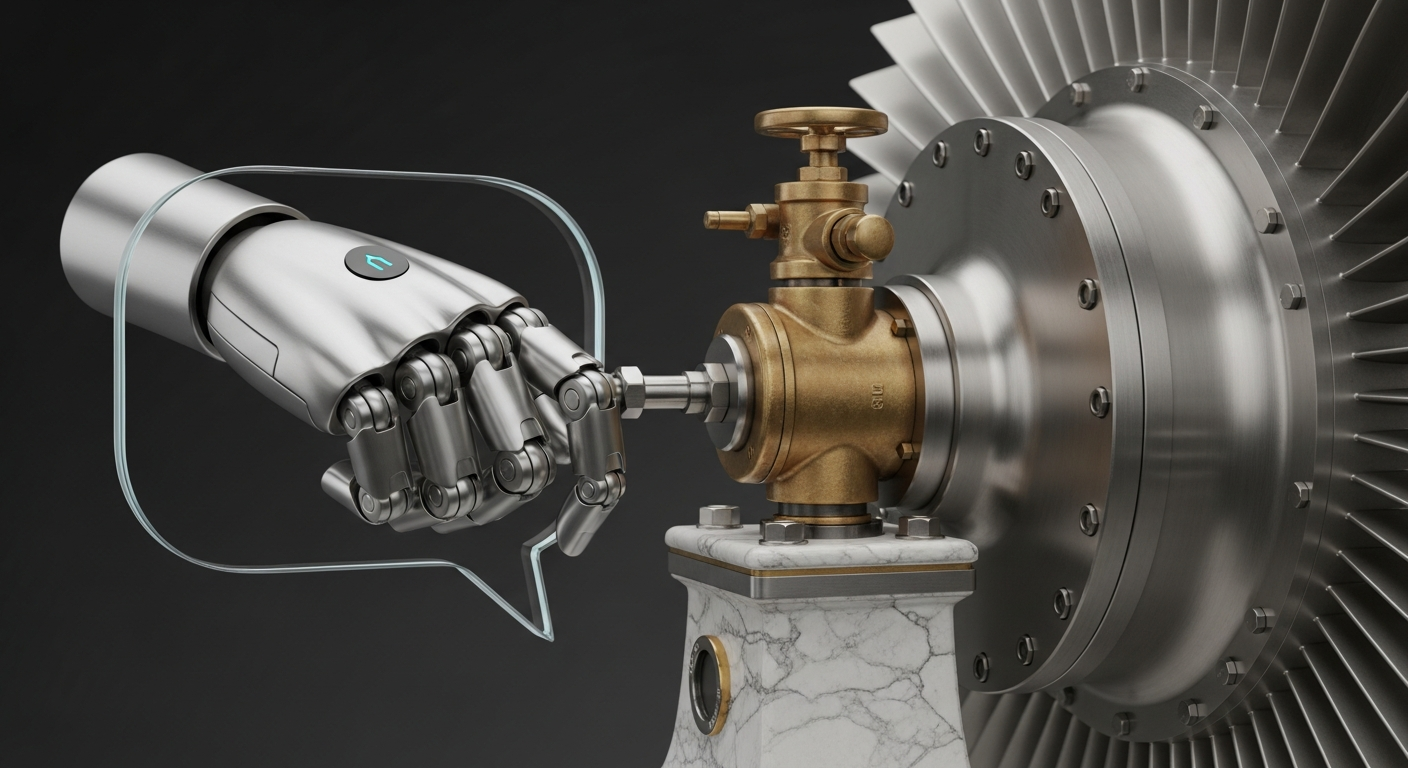

The first era of generative AI focused almost exclusively on conversational fluency. We learned how to talk to machines, and they learned how to mirror human syntax. Now, the industry is entering a second, more consequential phase: agency. Instead of simply generating a list of steps for a human to follow, AI is being granted the authority to execute those steps across third-party software and digital environments. This transition marks the end of the “chatbot” era and the beginning of the “agentic” era.

- The primary development focus has shifted from increasing model parameters to perfecting Large Action Models (LAMs) that can navigate user interfaces like a human.

- Strategic partnerships are forming between AI labs and enterprise software providers to create secure sandboxes where agents can operate without step-by-step manual oversight.

- Safety concerns are migrating from output bias to operational risk, necessitating new verification layers that require a human-in-the-loop for financial or high-stakes transactions.
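One way to picture the verification layer described in the last point is a small gate that sits between the agent and the action it wants to take. The sketch below is illustrative only: the `Action` class, the `APPROVAL_THRESHOLD` value, and the `approve` callback are hypothetical names chosen for this example, not part of any particular product.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy: any action above this amount needs human sign-off.
APPROVAL_THRESHOLD = 100.00


@dataclass
class Action:
    description: str
    amount: float = 0.0        # money at stake; 0 for non-financial actions
    high_stakes: bool = False  # set for risky actions that involve no money


def needs_approval(action: Action) -> bool:
    """Return True when a human must confirm the action first."""
    return action.high_stakes or action.amount > APPROVAL_THRESHOLD


def execute(action: Action, approve: Callable[[Action], bool]) -> str:
    """Run the action, asking the human only when the policy requires it."""
    if needs_approval(action) and not approve(action):
        return "blocked"
    # A real agent would call the third-party software here.
    return "executed"


# Example: a human who rejects everything they are asked about.
always_reject = lambda action: False
low_risk = execute(Action("rename a file"), always_reject)
high_risk = execute(Action("book a flight", amount=450.0), always_reject)
```

In this sketch the low-risk action runs without interruption, while the flight booking is held until a person approves it, which is the single-tap approval pattern in miniature.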

Everyday User Impact

For the average user, this shift moves the AI from a research assistant to a digital proxy. Today, if you want to plan a trip, you use AI to find flights, then you manually navigate to a website, enter your credit card information, and book the ticket. In the near future, your device will handle the entire transaction from end to end. You will provide a single spoken instruction, and the AI will navigate through various apps, compare real-time prices, and present a finished itinerary for a single-tap approval.

This eliminates the friction of “swivel-chair” labor: the tedious process of copying data between browser tabs or manually updating spreadsheets. You will spend significantly less time on administrative chores such as scheduling doctor appointments, disputing utility bills, or organizing digital files. The technology essentially acts as a personal coordinator that understands how your software works as well as you do, allowing you to focus on the final outcome rather than the navigation required to get there.

ROI for Business

For organizations, the value proposition moves from simple content creation to end-to-end process automation. The financial upside is found in the drastic reduction of labor hours spent on repetitive data entry and cross-platform synchronization. Instead of employing a team to manually reconcile invoices against bank statements, a single agentic workflow can perform the task with higher accuracy and at a fraction of the cost. The immediate return on investment is measured in “time-to-completion” for complex workflows, effectively transforming traditional overhead costs into scalable digital assets. However, this shift requires a new approach to risk management, as companies must now secure the “identity” of the AI agents to prevent automated unauthorized actions.

The Technical Shift

Behind the scenes, the industry is moving away from static text prediction and toward dynamic state management. Traditional models predict the next word; agentic AI predicts the next logical action within a software environment. This requires a feedback loop in which the agent acts, observes the result, and uses that observation to choose its next step. When an agent encounters an unexpected error, such as a changed website layout or a timed-out session, it must be able to self-correct and find an alternative path without crashing the workflow.
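The self-correction idea can be sketched as a loop that tries one strategy, records why it failed, and falls back to the next. This is a minimal illustration under stated assumptions: `StepFailed`, `run_step`, and the two click functions are hypothetical names, and the failures are simulated rather than coming from a real browser.

```python
from typing import Callable, Sequence


class StepFailed(Exception):
    """Raised when one strategy cannot complete the step."""


def run_step(strategies: Sequence[Callable[[], str]]) -> tuple[str, list[str]]:
    """Try each strategy in order; return the first result plus the errors seen."""
    errors: list[str] = []
    for strategy in strategies:
        try:
            return strategy(), errors
        except StepFailed as exc:
            # Keep the failure as feedback instead of crashing the workflow.
            errors.append(str(exc))
    raise StepFailed("all strategies failed: " + "; ".join(errors))


def click_by_id() -> str:
    # Simulates a site redesign that removed the element the agent expected.
    raise StepFailed("button id not found")


def click_by_label() -> str:
    # Simulates the alternative path: locating the button by its visible text.
    return "clicked 'Book now'"


result, errors = run_step([click_by_id, click_by_label])
```

Here the first strategy fails, the error is kept as an observation, and the second strategy completes the step. A production agent would likely generate alternatives dynamically rather than read them from a fixed list.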

This technical evolution involves integrating computer vision with reasoning engines, allowing the model to “see” a screen and map pixel coordinates to functional buttons. Developers are currently focused on solving the “long-horizon” problem, where an AI must maintain a specific goal over hundreds of small, sequential steps without losing focus or drifting into unintended behaviors. By shifting from output-based training to reward-based reinforcement learning, engineers are teaching models to prioritize the successful completion of a task over the mere generation of a plausible response.
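To make the pixel-to-button mapping concrete, the sketch below assumes a vision model has already returned a list of labeled bounding boxes for the current screen. The `UIElement` class, the helper functions, and the sample coordinates are all hypothetical; the detection step itself is not shown.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UIElement:
    label: str
    x: int       # left edge, in pixels
    y: int       # top edge, in pixels
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        """True if the pixel (px, py) falls inside this element's box."""
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)

    def center(self) -> tuple[int, int]:
        """The point an agent would click to activate this element."""
        return (self.x + self.width // 2, self.y + self.height // 2)


def element_at(elements: list[UIElement], px: int, py: int) -> Optional[UIElement]:
    """Map a pixel coordinate to the element under it, if any."""
    for element in elements:
        if element.contains(px, py):
            return element
    return None


def click_target(elements: list[UIElement], label: str) -> Optional[tuple[int, int]]:
    """Map a button label to the pixel coordinate to click."""
    for element in elements:
        if element.label.lower() == label.lower():
            return element.center()
    return None


# Assumed detector output for one screenshot.
screen = [
    UIElement("Search", 20, 10, 200, 40),
    UIElement("Book now", 300, 500, 120, 48),
]
target = click_target(screen, "book now")  # (360, 524)
```

The mapping works in both directions: `click_target` turns an intended action into coordinates, while `element_at` lets the agent check what a given pixel actually belongs to before it clicks.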