Executive Briefing

- Robotics is moving from structured warehouse environments to unstructured outdoor settings, where machines learn to manipulate deformable materials such as snow through physical reasoning.

- New thermal management architectures are closing the sub-zero performance gap, allowing actuators to maintain peak torque and lithium-ion batteries to preserve their longevity in extreme cold.

- High-resolution tactile feedback loops let machines sense material density in real time, so they neither lose their grip on nor crush objects that change shape under pressure.

Everyday User Impact

Imagine waking up to a heavy snowstorm and never having to touch a shovel or salt your driveway again. This shift moves robots off controlled factory floors and into your backyard. It is not just about the novelty of a robot building a snowman; it is about a machine that can navigate a slippery sidewalk, clear a path for your car, or inspect outdoor pipes during a deep freeze. You will soon treat your household robot as a rugged appliance rather than a fragile gadget. The technology promises to give back hours of physical labor every winter and to remove the physical risks of clearing heavy snow or working in freezing temperatures. Your interaction with technology is moving from screens to physical assistance that handles the most grueling parts of home maintenance.

ROI for Business

For enterprises, deploying robots that can function in hostile, unstructured environments promises a substantial reduction in seasonal liability and labor costs. Companies managing sprawling infrastructure (solar farms, rail lines, or telecommunications arrays, for example) currently face significant downtime and safety risks when ice and snow accumulate. Autonomous units capable of physical reasoning can maintain these assets without exposing people to hazardous conditions. The financial value lies in operational continuity: a robot that needs no heating breaks or safety harnesses can maintain outdoor assets around the clock during weather events that typically paralyze local commerce. Early adopters are shifting their budgets from experimental research to operational integration, viewing these ruggedized units as a necessary hedge against labor shortages and unpredictable climate patterns.

The Technical Shift

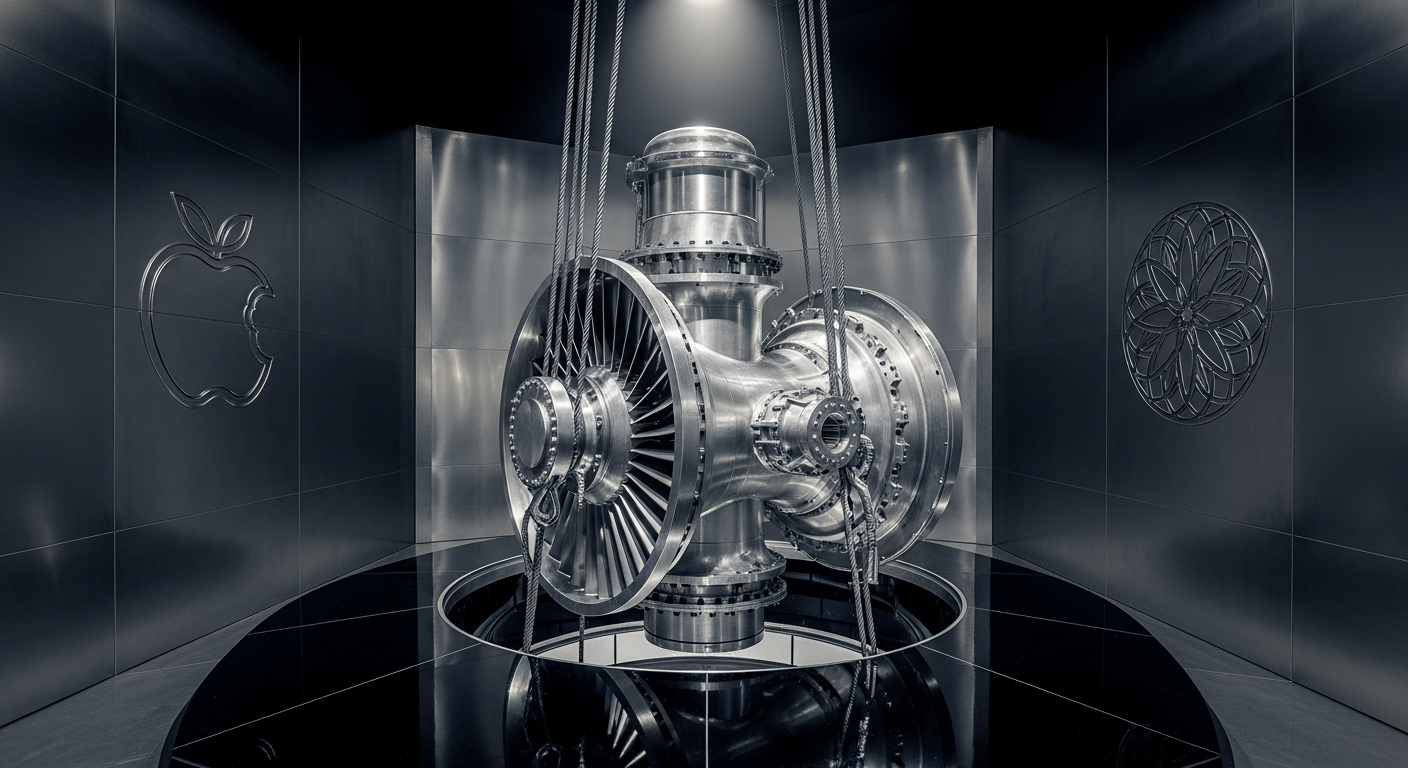

The transition from moving static boxes to manipulating snow hinges on progress in deformable object manipulation. Traditional robotics excels at rigid geometry, where every edge is defined and unchanging. Snow, however, changes density, weight, and state as it is handled. The current technical leap involves pairing Vision-Language-Action (VLA) models with high-fidelity haptic sensors. These systems do not just identify an object visually; they predict its structural integrity from the resistance it offers when gripped. Behind the scenes, engineers have implemented internal heat-recycling loops. These loops capture the thermal energy generated by the robot’s onboard processors and reroute it to the mechanical joints and battery housing. This prevents the “seizing” effect common in traditional hydraulics and electric motors exposed to the cold. By moving away from pre-programmed paths toward reactive, sensor-heavy physical intuition, the industry is effectively ending the “clean room” era of robotics and entering an age of environmental autonomy.
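To make the idea of predicting structural integrity from resistance more concrete, here is a minimal Python sketch of one control step in a haptic grip loop. It is an illustration under stated assumptions, not a description of any shipping system: the function name `next_grip_force`, the linear stiffness model, and every threshold (`yield_compression_mm`, `min_force_n`, `safety`, `gain`) are hypothetical values chosen for readability.

```python
def next_grip_force(
    current_force_n: float,
    compression_mm: float,
    desired_force_n: float,
    *,
    yield_compression_mm: float = 5.0,  # assumed compression at which the material crumbles
    min_force_n: float = 2.0,           # assumed force below which the object slips
    safety: float = 0.6,                # use only this share of the estimated crush force
    gain: float = 0.5,                  # fraction of the force error corrected per step
) -> float:
    """Return the next commanded grip force in newtons (hypothetical model)."""
    if compression_mm > 0:
        # Resistance reading: how many newtons it takes to compress the material 1 mm.
        stiffness_n_per_mm = current_force_n / compression_mm
        # Linear estimate of the crush force, scaled down by the safety margin.
        max_safe_force_n = safety * stiffness_n_per_mm * yield_compression_mm
    else:
        # No measurable give: treat the object as rigid, with no crush limit.
        max_safe_force_n = float("inf")

    # Raise the target to the anti-slip floor, then cap it at the crush limit.
    # When the two conflict, this sketch prefers not crushing the object.
    target_n = min(max_safe_force_n, max(min_force_n, desired_force_n))

    # Proportional step toward the target instead of jumping straight to it.
    return current_force_n + gain * (target_n - current_force_n)


# Packed snow resists firmly (10 N for 1 mm of give), so the grip tightens.
print(next_grip_force(10.0, 1.0, 20.0))  # 15.0
# Powdery snow yields easily (10 N for 4 mm of give), so the grip backs off.
print(next_grip_force(10.0, 4.0, 20.0))  # 8.75
```

The design point is that the same requested force produces opposite corrections depending on what the sensor feels, which is the behavior a pre-programmed path cannot provide. A real controller would also need sensor filtering, a nonlinear material model, and the visual prediction supplied by the VLA model.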