Executive Briefing

- Amazon’s Trainium-2 silicon has transitioned from a secondary experiment to a primary production engine, securing multibillion-dollar commitments from Anthropic and infrastructure partnerships with OpenAI.

- The strategic pivot toward custom ASICs (Application-Specific Integrated Circuits) allows AWS to undercut Nvidia-based compute costs by 40% to 50%, fundamentally altering the economics of model training.

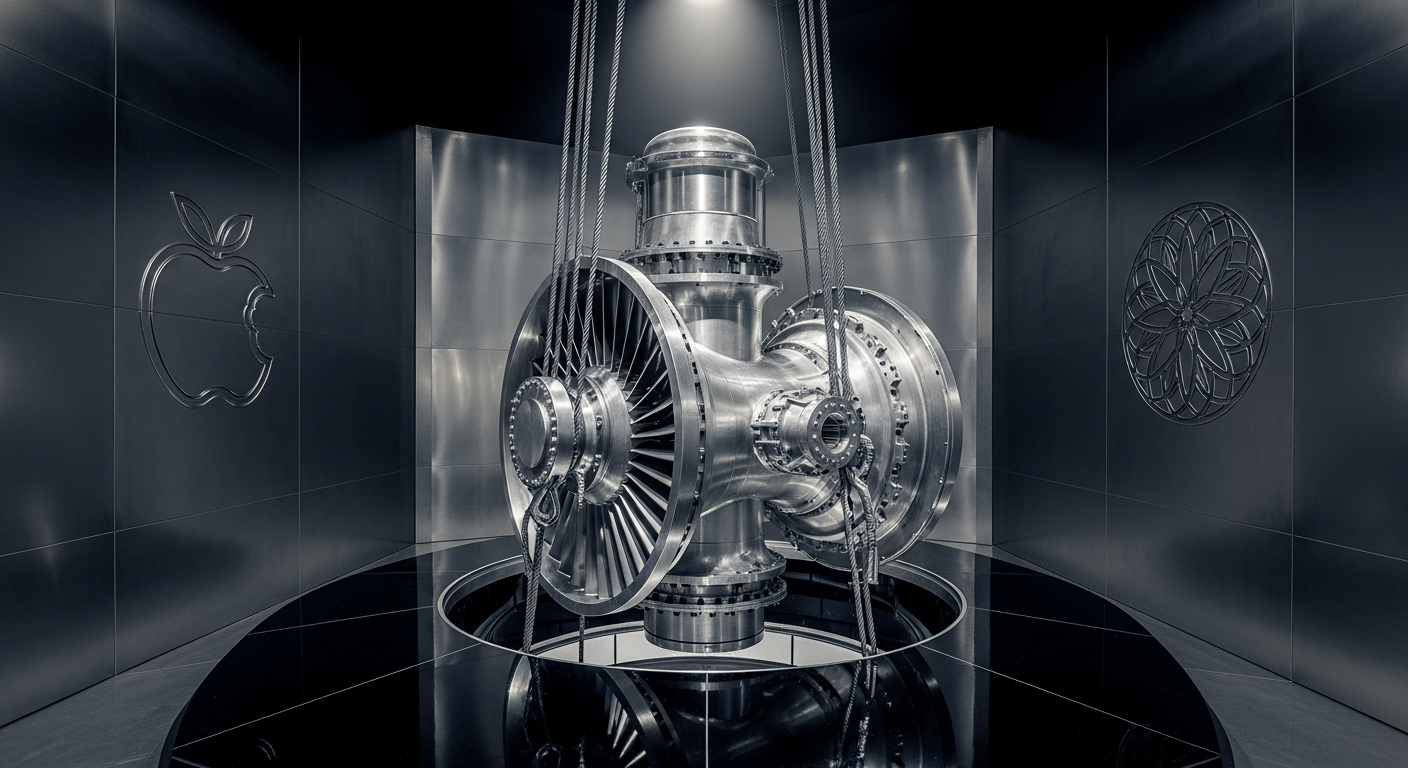

- Apple’s integration of Trainium into its Private Cloud Compute architecture validates the hardware’s capability to handle high-security, high-scale consumer AI processing outside of its own data centers.

The Technical Shift: Beyond General-Purpose Silicon

For the last decade, the AI industry has relied on Nvidia’s general-purpose GPUs to do the heavy lifting. While effective, these chips carry the “tax” of being designed for everything from gaming to crypto mining. Amazon’s Trainium-2 represents a move toward hyper-specialization. By stripping away non-essential functions, Amazon created a chip dedicated solely to the matrix arithmetic that training transformer models requires.

The real breakthrough isn’t just the individual chip speed; it is the “UltraCluster” architecture. Amazon is now networking over 100,000 Trainium-2 chips into a single massive supercomputer. This scale is achieved through custom-built interconnects—the digital highways that allow chips to talk to each other. When chips communicate faster, models train in weeks instead of months. This vertical integration—owning the chip, the server, and the data center—removes the bottlenecks that currently plague providers who simply buy off-the-shelf hardware.

Everyday User Impact: Faster, Cheaper, and More Private AI

While silicon architecture feels distant from your smartphone screen, this shift directly shapes how you interact with technology. Because Amazon is slashing the cost of running these massive models, you will see some relief from the “subscription fatigue” currently hitting AI apps. When it costs less for a company like Anthropic to run Claude, that company can offer more features in its free tier or keep monthly prices stable.

Additionally, your digital assistants are about to get a major intelligence boost without sacrificing your privacy. Apple’s decision to use Amazon’s hardware for its Private Cloud Compute means that when your iPhone sends a complex request to the cloud, it is being processed on hardware designed to be “stateless,” meaning it handles the task and immediately forgets the data. You get the power of a supercomputer with the privacy of a locked drawer. Finally, the sheer speed of this new hardware means that the gap between “new AI research” and “new feature on your phone” will shrink from years to mere months.

ROI for Business: Breaking the Nvidia Dependency

For the C-suite, the emergence of Trainium-2 provides a critical hedge against the Nvidia supply chain monopoly. Relying on a single vendor for compute creates a massive “concentration risk” that can halt product roadmaps if hardware shortages occur. By moving workloads to Trainium, enterprises can realize a 40% improvement in price-to-performance ratios. This isn’t just a marginal gain; it can be the difference between a project being commercially viable and being a total loss. Companies can now train larger models with the same budget or run existing models at as little as half the operational cost, freeing up capital for further R&D or direct bottom-line profit.
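
To make the budget arithmetic concrete, here is a minimal Python sketch. It is illustrative only: the baseline spend is a hypothetical figure, the function names are invented for this example, and the 40% and 50% inputs are simply the percentages quoted in this briefing, not measured benchmarks. It also shows that a 40% price cut and a 40% gain in work per dollar are not the same thing.

```python
def cost_after_discount(baseline_cost: float, discount: float) -> float:
    """Cost of the same workload when the price drops by `discount` (0.40 = 40% cheaper)."""
    return baseline_cost * (1.0 - discount)


def cost_after_perf_gain(baseline_cost: float, gain: float) -> float:
    """Cost of the same workload when work done per dollar rises by `gain` (0.40 = 40% more)."""
    return baseline_cost / (1.0 + gain)


def compute_multiplier(discount: float) -> float:
    """How much more compute a fixed budget buys after a price cut of `discount`."""
    return 1.0 / (1.0 - discount)


if __name__ == "__main__":
    baseline = 1_000_000.0  # hypothetical annual compute spend in dollars (assumption)

    for pct in (0.40, 0.50):
        print(
            f"{pct:.0%} price cut: same workload costs "
            f"${cost_after_discount(baseline, pct):,.0f}; "
            f"same budget buys {compute_multiplier(pct):.2f}x the compute"
        )

    # A 40% gain in price-performance is a smaller saving than a 40% price cut.
    print(
        f"40% more work per dollar: same workload costs "
        f"${cost_after_perf_gain(baseline, 0.40):,.0f}"
    )
```

Under these assumptions, halving the operating cost corresponds to the 50% end of the quoted range, where a fixed budget buys twice the compute. A 40% price-performance gain on its own trims the bill for a fixed workload by a little under 30%.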

The Investigative Outlook

Amazon is no longer content being a landlord for other people’s hardware. By building its own silicon, it has seized control of the most valuable resource in the modern economy: compute cycles. The fact that OpenAI, Microsoft’s closest partner, is reportedly looking at AWS infrastructure signals a massive shift in the power balance of the “Cloud Wars.” The era of “one-size-fits-all” AI hardware is ending, replaced by a bespoke infrastructure where the software and the silicon are designed in the same room.