Executive Briefing

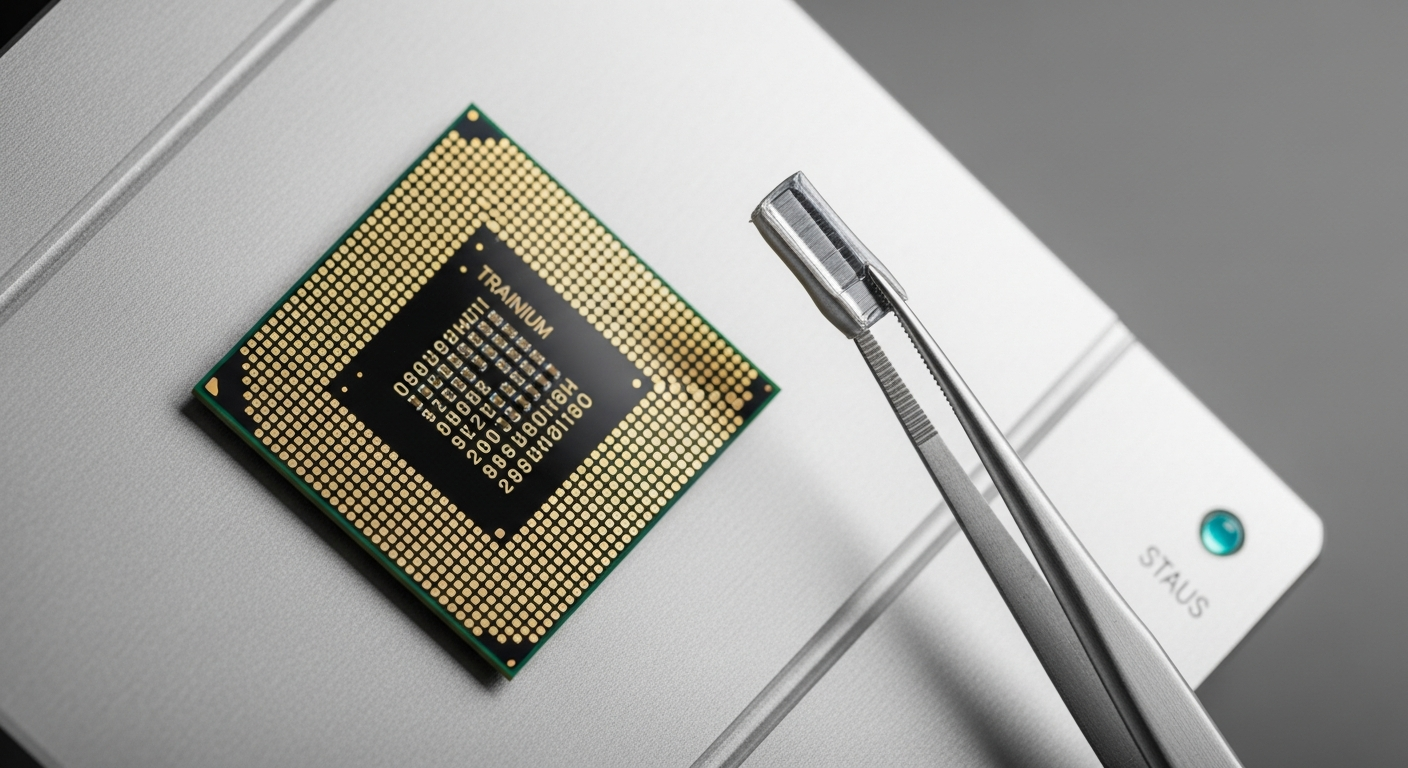

- Amazon has shifted the competitive landscape of model training by scaling the deployment of Amazon Trainium chips to external industry leaders.

- Performance benchmarks indicate that these custom silicon solutions reduce training time for massive language models by up to 40% compared to legacy GPU clusters.

- The strategy signals a move toward vertical integration, forcing major AI developers to reassess their reliance on traditional hardware suppliers for their AI workflows.

Everyday User Impact

For the average consumer, this development means that the applications you use daily are about to become faster and more accurate. When a company develops a new AI-powered feature, it must first train the underlying model on massive amounts of data.

With more efficient hardware, companies can run these complex training cycles more often. This leads to smarter, more responsive digital assistants and creative tools that cost less to maintain. Ultimately, you benefit from a more agile digital experience without needing to understand the underlying infrastructure.

This is not just about raw speed; it is about accessibility. As hardware costs drop thanks to better silicon design, the barrier to entry for smaller, innovative apps falls significantly. Your favorite specialized tools will likely gain new capabilities that were previously too expensive or too slow to develop.

ROI for Business and Amazon Trainium Chips

The business case for adopting custom hardware is no longer theoretical. CFOs at major tech firms now view hardware spend as a critical variable on their path to profitability. Companies integrating Amazon Trainium chips report a substantial improvement in their operational efficiency.

A key data point emerging from recent trials is that these chips deliver a 30% lower cost per token during the training phase. This shift allows businesses to reallocate capital toward research and development rather than infrastructure overhead.
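A 30% cut in cost per token is easier to weigh once it is translated into a budget. The sketch below is a back-of-the-envelope calculation, not a pricing model: the 2-trillion-token run and the $0.50-per-million-token baseline are hypothetical placeholders, and only the 30% reduction comes from the trials mentioned above. The helpers `training_cost` and `reduced_rate` are illustrative names, not part of any AWS tool.

```python
# Back-of-the-envelope sketch: how a lower cost per token changes the price of one training run.
# ASSUMPTION: the token count and baseline rate are hypothetical placeholders;
# only the 30% reduction figure comes from the article.


def training_cost(tokens: int, cost_per_million_tokens: float) -> float:
    """Return the total cost of processing `tokens` training tokens at a per-million-token rate."""
    return tokens / 1_000_000 * cost_per_million_tokens


def reduced_rate(baseline_rate: float, reduction: float) -> float:
    """Apply a fractional reduction (0.30 means 30% cheaper) to a per-token rate."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return baseline_rate * (1.0 - reduction)


if __name__ == "__main__":
    tokens = 2_000_000_000_000  # hypothetical 2-trillion-token training run
    baseline = 0.50             # hypothetical $ per million tokens on a legacy GPU cluster
    optimized = reduced_rate(baseline, 0.30)  # the article's 30% lower cost per token

    base_cost = training_cost(tokens, baseline)
    new_cost = training_cost(tokens, optimized)
    print(f"baseline:  ${base_cost:,.0f}")
    print(f"optimized: ${new_cost:,.0f}")
    print(f"freed for R&D: ${base_cost - new_cost:,.0f}")
```

With these placeholder inputs the run drops from $1,000,000 to $700,000, freeing $300,000. Because cost scales linearly with tokens in this simple model, the saving stays at 30% whatever the run size; real bills also depend on utilization, data pipelines, and engineering time, which this sketch ignores.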

Strategic leaders must now evaluate their current cloud dependencies. If your firm manages high-intensity workloads, shifting to optimized silicon is a direct lever to improve your bottom line. It effectively creates a margin advantage that competitors relying on generic hardware will struggle to match.

Strategic Shift in Infrastructure

Reliance on a single provider for high-end processing units has historically been a bottleneck for the industry. Amazon is loosening that concentration of supply: by providing broad access to Amazon Trainium chips, it is challenging the status quo in the cloud compute market.

This move is forcing a transition in which hardware design increasingly determines software capability. Organizations that fail to align their automation and development stacks with this new generation of silicon will find themselves at a distinct disadvantage.

Efficiency is the new currency of the digital age. The companies that master the transition to purpose-built hardware will control the speed at which their products evolve in the market.

Technical Intelligence Sources

For deep-dive analysis into hardware specifications and performance metrics, refer to the following sources:

- TechCrunch Exclusive: Inside the Amazon Trainium Lab

- AWS Neuron Documentation: Developers using Amazon Trainium chips should consult the official AWS Neuron SDK repositories for compatibility layers and performance optimization guides.

Fact-checked and technically reviewed by Joe Kunz on April 2, 2026.