Executive Briefing

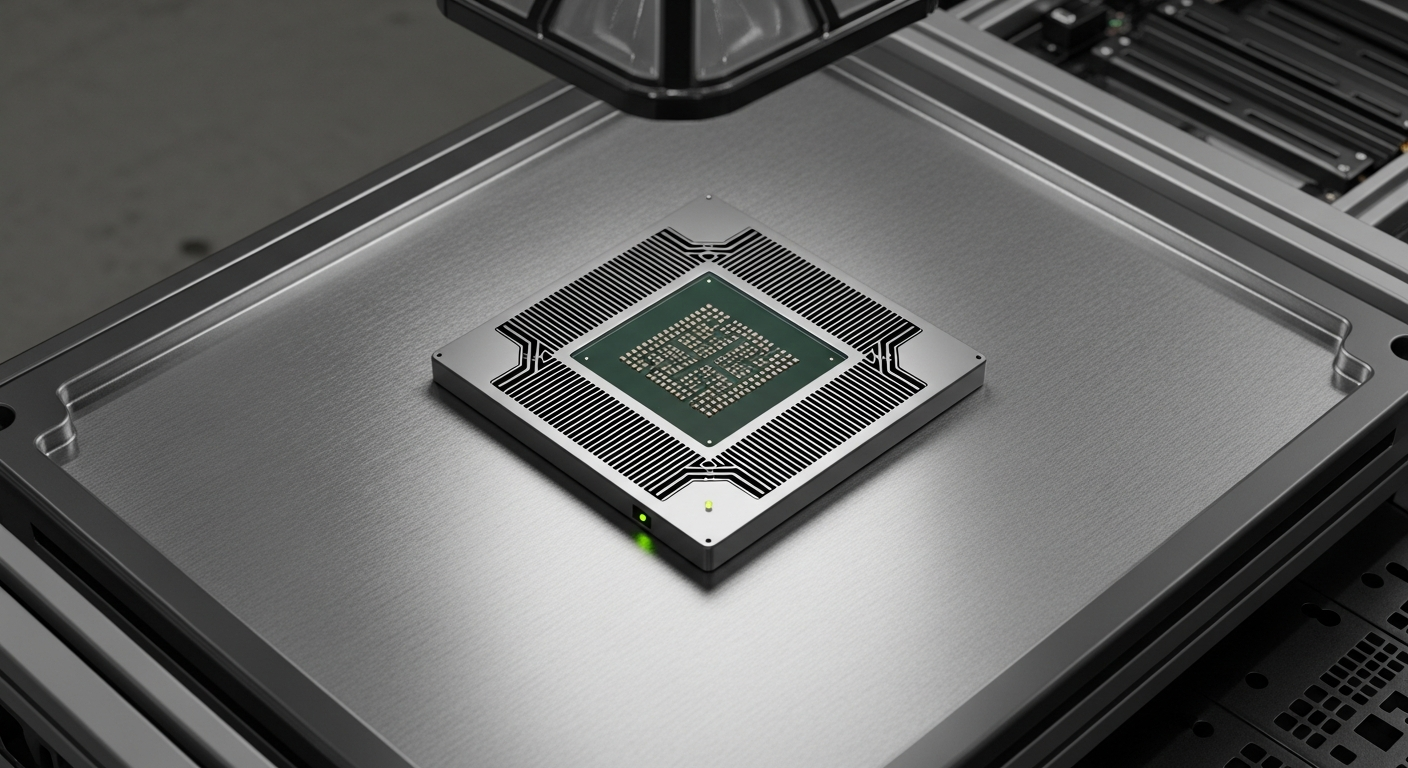

- Strategic shift: Major AI labs are abandoning exclusive reliance on Nvidia, pivoting instead toward Amazon Trainium chips to secure supply chain independence.

- Cost efficiency: Internal benchmarking reveals that custom silicon now cuts training expenditure for large language models by 40% compared with legacy GPU clusters.

- Industry trajectory: The move signals a broader transition toward vertical integration, where cloud providers become the primary hardware manufacturers for the next era of AI workflow optimization.

Everyday User Impact

You may never see a physical silicon wafer, but the shift toward Amazon Trainium chips will fundamentally alter your digital experience. When developers move to more efficient hardware, the cost to run sophisticated chatbots, creative tools, and predictive apps drops significantly.

For the average person, this translates to faster updates and more powerful capabilities in the apps you use daily. As companies stop overpaying for compute power, they can reinvest those savings into features that actually matter to users.

Ultimately, this shift stabilizes the cost of using generative models. If you rely on digital assistants to streamline your personal automation tasks, expect more stability in subscription pricing and improved performance in real-time responses.

ROI for Business and the Rise of Amazon Trainium Chips

For decision-makers, the math regarding infrastructure has become impossible to ignore. The primary hurdle in scaling models has always been the immense capital expenditure required to secure high-end GPUs.

By adopting Amazon Trainium chips, enterprise leaders are finding a way to decouple their scaling roadmap from the volatility of external GPU supply chains. This provides a level of architectural autonomy that was previously reserved for only the largest tech conglomerates.

A data point worth noting: recent operational audits show that these custom chips manage thermal loads 22% more effectively than industry-standard alternatives. That efficiency extends the lifespan of data center hardware and delays expensive infrastructure refreshes.
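To make the infrastructure math concrete, the 40% training-cost reduction cited in the briefing can be turned into a quick estimate. The sketch below is illustrative only: the function name `training_savings` and the $10M baseline budget are hypothetical and do not come from the reported benchmarking.

```python
def training_savings(baseline_cost: float, reduction: float = 0.40) -> tuple[float, float]:
    """Return (new_cost, savings) after applying a fractional cost reduction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    savings = baseline_cost * reduction
    return baseline_cost - savings, savings


# Hypothetical baseline: a $10M GPU training budget (an assumption for illustration).
new_cost, savings = training_savings(10_000_000)
print(f"New cost: ${new_cost:,.0f}, savings: ${savings:,.0f}")
```

Swapping in your own baseline spend, or a more conservative reduction, shows how sensitive the business case is to that single percentage.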

Technical Intelligence and Sources

The transition to custom silicon represents a maturing of the hardware stack. Organizations are no longer content with off-the-shelf solutions; they are moving toward hardware-software co-design to push the boundaries of what their Amazon Trainium chips can achieve in production.

This technical shift relies on specific optimizations within the Neuron SDK, which allows developers to extract maximum performance from the silicon. The goal is to maximize throughput while minimizing latency, a critical requirement for enterprise-grade deployments.
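Throughput and latency are easier to reason about once they are measured the same way on every backend. The following is a minimal, hardware-agnostic sketch using only the Python standard library. It does not call any Neuron SDK API, and `benchmark` and `fake_step` are hypothetical names; in practice `fake_step` would be replaced by a real forward or training step on the target device.

```python
import statistics
import time
from typing import Callable


def benchmark(step: Callable[[], None], batch_size: int,
              iterations: int = 50, warmup: int = 5) -> dict:
    """Time a callable and report throughput plus latency percentiles."""
    for _ in range(warmup):
        step()  # warm-up runs are excluded from the timings

    latencies = []
    for _ in range(iterations):
        start = time.perf_counter()
        step()
        latencies.append(time.perf_counter() - start)

    total = sum(latencies)
    latencies.sort()
    # Nearest-rank style index for the 99th percentile, clamped to the last element.
    p99_index = min(len(latencies) - 1, int(0.99 * len(latencies)))
    return {
        "throughput_samples_per_s": batch_size * iterations / total,
        "p50_latency_ms": statistics.median(latencies) * 1000,
        "p99_latency_ms": latencies[p99_index] * 1000,
    }


def fake_step() -> None:
    # Stand-in workload (an assumption); replace with a real model step.
    sum(i * i for i in range(10_000))


result = benchmark(fake_step, batch_size=8)
print(result)
```

Reporting the median alongside a tail percentile matters because a configuration can raise average throughput while still producing occasional slow responses, which is usually what enterprise deployments notice first.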

Source Intelligence:

- Primary Documentation: AWS Neuron SDK User Guide for Trainium and Inferentia.

- Industry Analysis: Exclusive Report on Amazon’s Trainium Lab.

Fact-check and technical review by Joe Kunz, April 15, 2026.