Executive Briefing

- Amazon has challenged Nvidia’s dominance of AI hardware by securing large deployment commitments for its Trainium 3 silicon from Anthropic, OpenAI, and Apple.

- The shift marks a transition from general-purpose GPUs to application-specific integrated circuits (ASICs) that trade the versatility of power-hungry graphics processors for energy efficiency and memory bandwidth.

- By designing its chips in-house, AWS is offering a 40% improvement in price-performance, forcing a rethink of how the world’s largest AI models are funded and scaled.

The Technical Shift

For the past decade, the AI industry functioned as a monoculture built on Nvidia’s CUDA software and GPU hardware. Amazon’s Trainium 3 represents the most significant architectural pivot since the start of the generative AI era. Unlike standard GPUs, which were originally designed for rendering pixels, Trainium carries no legacy graphics components and focuses entirely on the matrix multiplications required for deep learning.

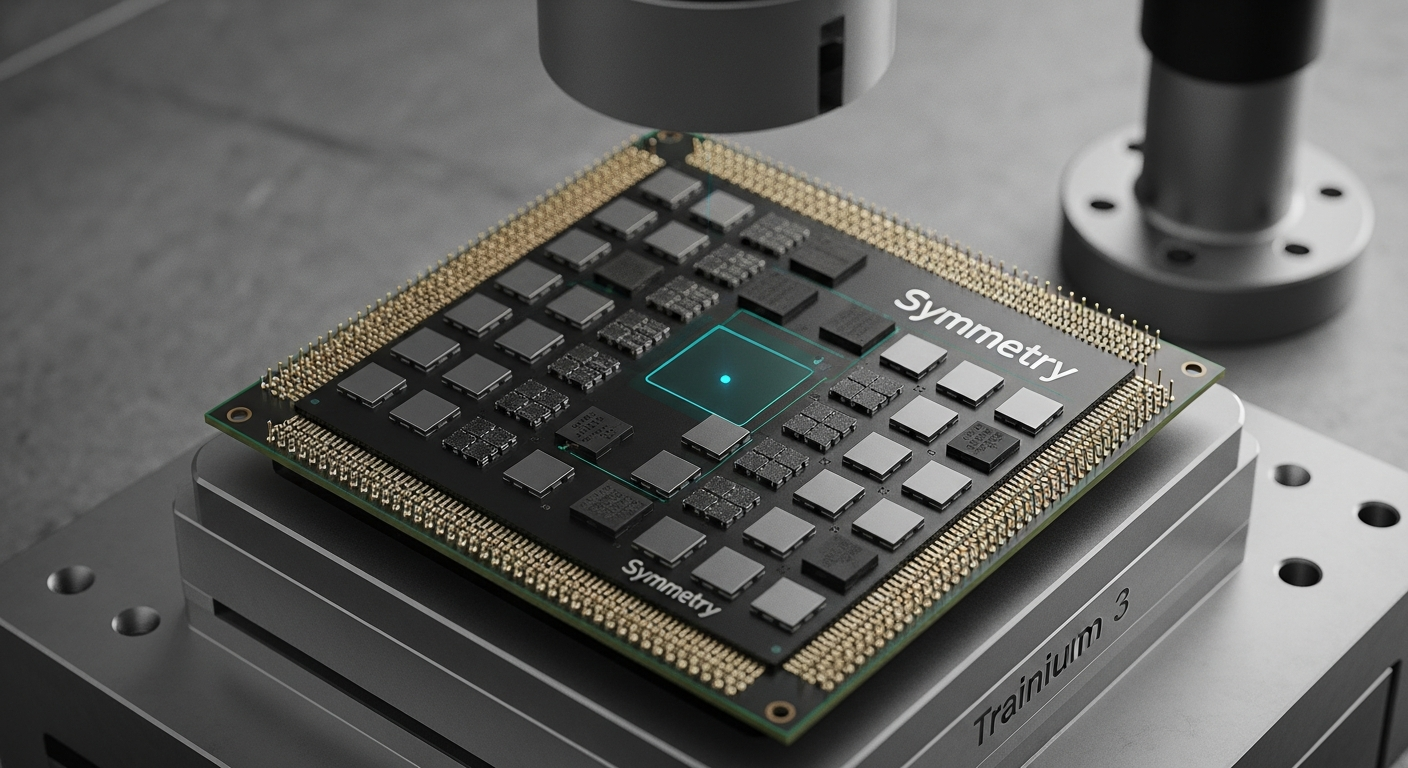

The core innovation lies in the “Symmetry” interconnect, a proprietary networking fabric that allows tens of thousands of chips to act as a single, cohesive processor. This reduces the “latency tax” incurred whenever data moves between chips. By optimizing the hardware specifically for transformer-based architectures, Amazon has reduced heat output and increased throughput per rack. This specialization is why OpenAI and Anthropic are diversifying their workloads away from Nvidia; they no longer need a “Swiss Army knife” chip when the only job is running trillion-parameter models.

Everyday User Impact

The arrival of Trainium-powered clouds means the “invisible friction” of AI will start to disappear. Currently, when you ask an AI to summarize a document or generate an image, there is often a noticeable lag and a high cost passed down through subscription fees. Because Amazon’s hardware is significantly cheaper to operate, developers can afford to make their apps faster and more responsive without raising prices.

For the average person, this tech shift translates into “instant” intelligence. Your phone’s voice assistant will transition from a scripted bot to a conversational partner that processes your requests in milliseconds rather than seconds. Additionally, Apple’s decision to use Trainium for its backend services suggests that features like Apple Intelligence will become more sophisticated and reliable, handling complex tasks in the cloud that were previously too expensive or slow to execute at a global scale. You won’t see the chip, but you will feel its presence through longer battery life on your devices and smarter, free-to-use digital tools.

ROI for Business

The strategic value for enterprises lies in total cost of ownership and the mitigation of supply chain risk. For years, companies paid an “Nvidia tax,” accepting premium prices and months-long waits for hardware delivery. AWS Trainium eases both bottlenecks. For a CTO, switching to Trainium-based instances can cut model training budgets by roughly 30 to 40 percent, depending on how the 40% price-performance gain is measured, allowing for more frequent iterations and faster time-to-market. Furthermore, the energy efficiency gains mean that companies can meet their sustainability targets while simultaneously scaling their AI infrastructure. This is no longer about raw power; it is about the economic sustainability of high-scale computing.
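
To make the budget claim concrete, here is a minimal Python sketch of how a price-performance gain translates into training-cost savings. It assumes “price-performance” means work done per dollar, which the article does not specify. The function names and the $10M baseline budget are hypothetical, chosen only for illustration.

```python
def cost_fraction(price_perf_gain: float) -> float:
    """Fraction of the baseline budget needed to do the same work.

    Assumption: price-performance is work per dollar, so a gain of g
    means each dollar buys (1 + g) times as much work.
    """
    if price_perf_gain <= -1.0:
        raise ValueError("gain must be greater than -100%")
    return 1.0 / (1.0 + price_perf_gain)


def savings(price_perf_gain: float) -> float:
    """Share of the baseline budget saved for a fixed workload."""
    return 1.0 - cost_fraction(price_perf_gain)


def gain_needed_for_savings(target_savings: float) -> float:
    """Price-performance gain required to hit a target budget cut."""
    if not 0.0 <= target_savings < 1.0:
        raise ValueError("target_savings must be in [0, 1)")
    return 1.0 / (1.0 - target_savings) - 1.0


if __name__ == "__main__":
    baseline_budget = 10_000_000  # hypothetical training budget in USD
    gain = 0.40                   # the 40% figure cited in the briefing

    new_budget = baseline_budget * cost_fraction(gain)
    print(f"Budget for the same work: ${new_budget:,.0f}")
    print(f"Savings: {savings(gain):.1%}")
    print(f"Gain needed to halve the budget: {gain_needed_for_savings(0.5):.0%}")
```

Under that assumption, a 40% gain reduces the cost of a fixed workload by about 29%, and halving the budget would require a 100% gain. If the 40% figure is instead read as a direct price cut for the same work, the saving is simply 40%.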

The Investigative Take

Amazon is playing a long game that positions AWS as more than just a landlord for other people’s hardware. By winning over Apple and OpenAI, two companies with arguably the highest standards for compute efficiency, Amazon has made the case that custom silicon is the new baseline for cloud dominance. The narrative that Nvidia is the only “picks and shovels” provider in this gold rush is dead. We are entering an era of fragmented, specialized compute where the winners are those who control the physical silicon and the electricity that powers it.